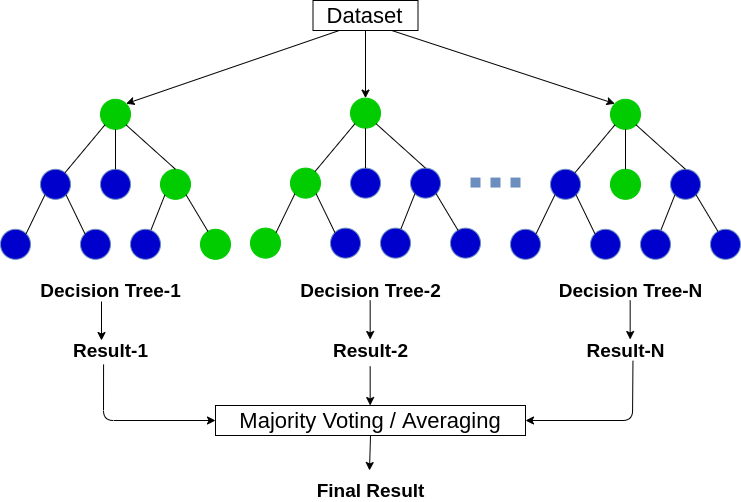

Given a data set D1 (n rows and p columns), it creates a new dataset (D2) by sampling n cases at random with replacement from the original data. First, it uses the Bagging (Bootstrap Aggregating) algorithm to create random samples.But since the data set available is limited to us, we can use resampling techniques like bagging and random forest to generate more data.īuilding many decision trees results in a forest. We can overcome the variance problem by using more data for training. "High Variance" means getting high prediction error on unseen data.

Higher the purity, lesser the uncertainity to make the decision.īut a decision tree suffers from high variance. In a nutshell, every tree attempts to create rules in such a way that the resultant terminal nodes could be as pure as possible. The entropy is minimum when the probability is 0 or 1.Įntropy = - p(a)*log(p(a)) - p(b)*log(p(b)) For p(X=a)=0.5 or p(X=b)=0.5 means, a new observation has a 50%-50% chance of getting classified in either classes. For a binary class (a,b), the formula to calculate it is shown below. Entropy - Entropy is a measure of node impurity.For a split to take place, the Gini index for a child node should be less than that for the parent node. If the Gini index takes on a smaller value, it suggests that the node is pure. Gini Index - It's a measure of node purity.In classification trees (where the output is predicted using mode of observations in the terminal nodes), the splitting decision is based on the following methods:.We call it "greedy" because the algorithm cares to make the best split at the current step rather than saving a split for better results on future nodes. The tree splitting takes a top-down greedy approach, also known as recursive binary splitting. The variable which leads to the greatest possible reduction in RSS is chosen as the root node. In regression trees (where the output is predicted using the mean of observations in the terminal nodes), the splitting decision is based on minimizing RSS.A variable at root node is also seen as the most important variable in the data set.ģ, But how is this homogeneity or pureness determined? In other words, how does the tree decide at which variable to split? In the image above, the variable X1 resulted in highest homogeneity in child nodes, hence it became the root node. These rules are determined by a variable's contribution to the homogenity or pureness of the resultant child nodes (X2,X3).Ģ. These rules divide the data set into distinct and non-overlapping regions. 
Given a data frame (n x p), a tree stratifies or partitions the data based on rules (if-else). To understand the working of a random forest, it's crucial that you understand a tree. How does it work? (Decision Tree, Random Forest) Trivia: The random Forest algorithm was created by Leo Brieman and Adele Cutler in 2001. In classification problems, the dependent variable is categorical. In regression problems, the dependent variable is continuous. Random Forest can be used to solve regression and classification problems. This is how we use ensemble techniques in our daily life too. 8 of them said " the movie is fantastic." Since the majority is in favor, you decide to watch the movie. You ask 10 people who have watched the movie. Ensembling is nothing but a combination of weak learners (individual trees) to produce a strong learner. The method of combining trees is known as an ensemble method. Random forest is a tree-based algorithm which involves building several trees (decision trees), then combining their output to improve generalization ability of the model. Advantages and Disadvantages of Random Forest.What is the difference between Bagging and Random Forest?.How does it work? (Decision Tree, Random Forest).Also, you'll learn the techniques I've used to improve model accuracy from ~82% to 86%. For ease of understanding, I've kept the explanation simple yet enriching. I've used MLR, data.table packages to implement bagging, and random forest with parameter tuning in R. In this article, I'll explain the complete concept of random forest and bagging. Most often, I've seen people getting confused in bagging and random forest. Its ability to solve-both regression and classification problems along with robustness to correlated features and variable importance plot gives us enough head start to solve various problems. If you are new to machine learning, the random forest algorithm should be on your tips. In fact, the easiest part of machine learning is coding. 
However, I've seen people using random forest as a black box model i.e., they don't understand what's happening beneath the code. With its built-in ensembling capacity, the task of building a decent generalized model (on any dataset) gets much easier. Random Forest is one of the most versatile machine learning algorithms available today. Not for the sake of nature, but for solving problems too!
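The bootstrap step, together with the majority vote from the movie example, can be sketched in a few lines of Python (the article itself works in R with the mlr package). This is a minimal illustration under stated assumptions, not the article's implementation: it does not fit any trees, `bootstrap_sample` and `majority_vote` are hypothetical helper names, the ten-row data set is a toy, and the label of the two dissenting votes is made up.

```python
import random
from collections import Counter


def bootstrap_sample(rows, rng):
    """Build D2 by drawing len(rows) cases from D1 at random, with replacement."""
    n = len(rows)
    return [rows[rng.randrange(n)] for _ in range(n)]


def majority_vote(predictions):
    """Combine class predictions (one per tree) by taking the most common label."""
    return Counter(predictions).most_common(1)[0][0]


rng = random.Random(42)          # fixed seed so the sketch is reproducible
d1 = list(range(10))             # toy data set: 10 row ids standing in for n rows
d2 = bootstrap_sample(d1, rng)
print(len(d2))                   # 10: D2 has as many rows as D1
print(sorted(d2))                # typically contains repeats and misses some ids

# Movie example: 10 voters ("trees"), 8 of them say "fantastic".
votes = ["fantastic"] * 8 + ["not fantastic"] * 2  # hypothetical dissenting label
print(majority_vote(votes))      # fantastic
```

Because D2 is drawn with replacement, it has the same number of rows as D1 but usually repeats some rows and leaves others out, so trees trained on different bootstrap samples see slightly different data. For regression, the aggregation step would average the trees' numeric predictions instead of voting.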

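The split criteria from the decision tree section can also be computed directly. The Python sketch below is illustrative only: `gini`, `entropy`, `rss`, and `best_split` are hypothetical helper names, the data are toy values, entropy uses log base 2 (the article leaves the base unspecified; changing it only rescales the values), and the Gini index uses the standard form 1 - Σ p_k². `best_split` performs one greedy step of recursive binary splitting for a regression tree: it tries every variable and threshold and keeps the pair with the lowest total RSS, which is the greatest RSS reduction.

```python
import math


def gini(labels):
    """Gini index of a node: 1 - sum of squared class proportions (0 = pure)."""
    n = len(labels)
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def entropy(labels):
    """Entropy of a node in bits: sum of p * log2(1/p) over classes (0 = pure)."""
    n = len(labels)
    counts = (labels.count(c) for c in set(labels))
    return sum((k / n) * math.log2(n / k) for k in counts)


def rss(ys):
    """Residual sum of squares around the node mean (regression trees)."""
    mean = sum(ys) / len(ys)
    return sum((y - mean) ** 2 for y in ys)


def best_split(columns, ys):
    """One greedy step: the (variable, threshold) pair with the lowest total RSS.

    columns maps a variable name to its values, one value per entry of ys.
    """
    best = None
    for name, xs in columns.items():
        for t in sorted(set(xs))[:-1]:  # the largest value would leave the right side empty
            left = [y for x, y in zip(xs, ys) if x <= t]
            right = [y for x, y in zip(xs, ys) if x > t]
            total = rss(left) + rss(right)
            if best is None or total < best[2]:
                best = (name, t, total)
    return best


print(gini(["a", "a", "b", "b"]))     # 0.5: maximally mixed binary node
print(gini(["a", "a", "a", "a"]))     # 0.0: pure node
print(entropy(["a", "a", "b", "b"]))  # 1.0: p(a) = p(b) = 0.5
print(entropy(["a", "a", "a", "a"]))  # 0.0: probability 1 for one class

# Toy regression data: X1 separates low and high responses, X2 does not.
columns = {"X1": [1, 2, 3, 10, 11, 12], "X2": [7, 3, 9, 1, 8, 2]}
ys = [5, 6, 5, 20, 21, 19]
name, threshold, total = best_split(columns, ys)
print(name, threshold)                # X1 3
```

Repeating this search inside each resulting region is the "recursive" part of recursive binary splitting; it is omitted here to keep the sketch short.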